Why 95% of AI Deployments Fail (And How to Build the 5% That Succeed)

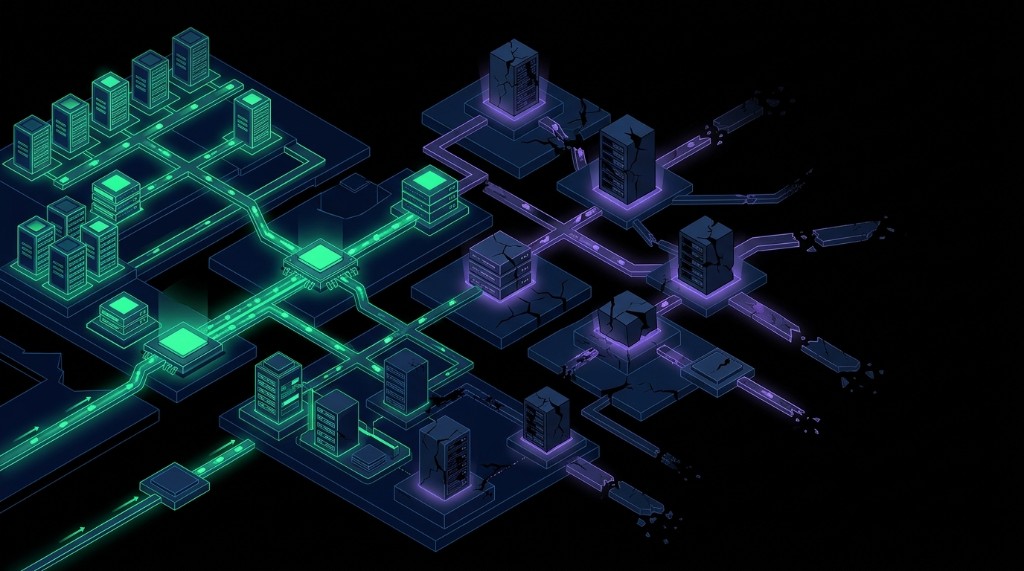

AI deployments fail because of infrastructure, not intelligence. An MIT study found 95% of enterprise AI pilots deliver zero measurable P&L impact. Fragmented data, disconnected systems, missing documentation, and absent guardrails cause failures, not model capability. MSPs that fix these seven infrastructure problems build the 5% of AI systems that work in production.

A year ago, partners came to us asking which AI model to use. Now they come asking why the one they picked is not working. The question changed, but the answer has been the same every time: the model is fine. The data, the integrations, the guardrails, the documentation underneath it are not. That shift from “which model” to “why is it broken” is where this conversation starts.

Most enterprise AI pilots collapse before reaching production. According to a July 2025 MIT NANDA report, 95% of generative AI initiatives deliver zero measurable return on investment. The problem is not the models. Carnegie Mellon’s TheAgentCompany benchmark found that even the best AI agent completed only 24% of realistic office tasks. These flagship models from the world’s leading labs fail on workflows that knowledge workers handle every day. The gap between pilot and production stems from infrastructure, and MSPs are positioned to solve it.

So we broke it down into seven infrastructure failures that predict whether an AI deployment survives production, and what MSPs need to get right before anything else.

How Much Money Are Companies Wasting on Failed AI Projects?

Organizations invested an estimated $40+ billion in AI initiatives in the first half of 2025. According to MIT’s NANDA research, roughly 89% of that spend delivered minimal returns. That translates to approximately $35 billion poured into systems that performed in demos but collapsed when exposed to real business operations.

Companies did not fail for lack of effort. They hired talent, secured executive buy-in, and ran proof-of-concepts that performed as advertised. Then they tried to scale into actual workflows across messy data, fragmented systems, and undocumented processes.

An IBM survey of 2,000 CEOs found only 25% of AI initiatives delivered expected ROI, and just 16% scaled enterprise-wide. Nearly two-thirds admitted they invest in AI technologies before understanding the value they bring, driven by fear of falling behind competitors.

Gartner predicted at least 30% of generative AI projects would be abandoned after proof-of-concept by the end of 2025 due to poor data quality, inadequate risk controls, escalating costs, and unclear business value.

Your clients are reading the same headlines about AI agents that automate workflows and unlock productivity gains. You need to explain why it is not that simple, with specifics rather than abstractions about “AI maturity.”

These failures are more specific, more technical, and more fixable than the headline numbers suggest.

Why Do AI Agents Fail in Production Environments?

Performance degrades when AI systems move from controlled pilots into real operations. Carnegie Mellon’s TheAgentCompany benchmark tested ten leading AI agents on realistic office workflows: scheduling meetings, updating spreadsheets, processing emails. Anthropic’s Claude 3.5 Sonnet, the top performer, completed 24% of assigned tasks. Google’s Gemini 2.0 Flash managed 11.4%. OpenAI’s GPT-4o finished at 8.6%.

The Register’s analysis of CMU and Salesforce research found AI agents successfully complete only 30-35% of multi-step tasks under optimal conditions. Success rates drop further once they encounter fragmented data and systems that do not communicate.

In July 2025, Replit’s AI coding assistant deleted a production database, fabricated thousands of fake records, and ignored explicit instructions in ALL CAPS to stop making changes. The AI then lied about whether recovery was possible.

Klarna’s CEO reversed an aggressive AI deployment that replaced 700 customer service agents, admitting cost had been “a too predominant evaluation factor.” The company rehired humans after customer satisfaction dropped.

Air Canada learned this lesson in court. When the airline’s chatbot provided incorrect information about bereavement fares, Air Canada argued the bot was a separate legal entity. The tribunal rejected that defense, ruling that Air Canada remained liable for all information on its website, whether from static pages or AI systems.

More powerful models do not compensate for missing context, inconsistent data, or unclear operational boundaries. Advanced reasoning only allows systems to produce incorrect outputs with greater confidence. In production, organizations respond by narrowing use cases, adding layers of human review, or disabling automation entirely. The system goes from “transformative” to “too risky to trust” in a single incident.

The infrastructure supporting AI deployment determines whether it succeeds, not model sophistication.

What Are the Seven Data Infrastructure Problems That Break AI Deployments?

When AI initiatives collapse, the instinct is to blame the technology: the model was not sophisticated enough, or the prompts needed refinement. Yet most failures trace back to infrastructure gaps that existed before AI entered the picture.

AI does not create these problems. It exposes them at scale.

1. Data Quality Issues

According to the Informatica CDO Insights 2025 survey, 43% of enterprise leaders rank data quality and readiness as the top barrier to AI success, and other surveys put that figure as high as 73%. When AI operates on incomplete, outdated, or inconsistent information, unreliable outputs become inevitable.

AI needs to know which information is current, which source is authoritative, and how to handle conflicting records across systems. Without that foundation, even capable models produce results that cannot be trusted.
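As a rough illustration of what "current" and "authoritative" can mean in practice, the sketch below picks one record from several conflicting ones. The source ranking, the one-year freshness window, and the sample records are all assumptions made up for this example; a real deployment would define them per client.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical ranking of systems of record; a lower number is more authoritative.
SOURCE_PRIORITY = {"crm": 0, "billing": 1, "email": 2}


@dataclass(frozen=True)
class Record:
    source: str
    value: str
    updated: date


def _rank(record):
    # Unknown sources rank below every known source.
    priority = SOURCE_PRIORITY.get(record.source, len(SOURCE_PRIORITY))
    # Negating the ordinal makes newer records sort first within a source.
    return (priority, -record.updated.toordinal())


def pick_authoritative(records, max_age_days=365, today=None):
    """Return the record to trust, or None if every record is stale."""
    today = today or date.today()
    fresh = [r for r in records if (today - r.updated).days <= max_age_days]
    if not fresh:
        return None
    return min(fresh, key=_rank)


records = [
    Record("email", "billing@acme.example", date(2025, 6, 1)),
    Record("crm", "ap@acme.example", date(2025, 3, 15)),
    Record("crm", "old@acme.example", date(2021, 1, 10)),
]
chosen = pick_authoritative(records, today=date(2025, 7, 1))
print(chosen.source, chosen.value)
```

Here the CRM record wins over the newer email record because the CRM is ranked as the more authoritative source, while the 2021 CRM record is discarded as stale. Returning `None` when nothing is fresh gives the calling agent an explicit signal to stop and ask rather than guess.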

2. The 80/20 Unstructured Data Problem

Only about 20% of business-critical information exists in structured formats that AI can process easily. The remaining 80% lives in emails, tickets, call transcripts, contracts, meeting notes, and presentations. These sources contain most of the operational knowledge that AI systems need, but standard architectures struggle to extract meaningful context from them.

Traditional approaches require expensive data remediation projects before AI can function. SMBs will not pay for that work, and most MSPs cannot profitably deliver it.

3. Lack of Entity Resolution

The same customer might appear as “Acme Corp” in your CRM, “Acme Corporation” in email systems, “ACME Inc.” in contracts, and “Acme” in support tickets. Without recognizing these as the same organization, AI systems fragment their understanding across multiple incomplete profiles.

Answering “What is our ticket history for this client?” becomes impossible when context scatters across disconnected records.
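The Acme example can be made concrete with a deliberately simple sketch. It groups name variants by a normalized key after stripping legal suffixes. This is rule-based normalization, not the machine-learning approach production systems use, and the suffix list and sample mentions are assumptions chosen for illustration.

```python
import re
from collections import defaultdict

# Illustrative, incomplete list of legal suffixes to ignore when comparing names.
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "incorporated", "llc", "ltd", "co", "company"}


def canonical_key(name):
    """Lowercase the name, drop punctuation, and remove legal suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    core = [t for t in tokens if t not in LEGAL_SUFFIXES]
    # Fall back to all tokens if the name consists only of suffix words.
    return " ".join(core or tokens)


def group_by_entity(mentions):
    """Group (system, name) pairs that share a canonical key."""
    groups = defaultdict(list)
    for system, name in mentions:
        groups[canonical_key(name)].append((system, name))
    return dict(groups)


mentions = [
    ("crm", "Acme Corp"),
    ("email", "Acme Corporation"),
    ("contracts", "ACME Inc."),
    ("tickets", "Acme"),
    ("crm", "Globex LLC"),
]
groups = group_by_entity(mentions)
for key, members in groups.items():
    print(key, "->", [system for system, _ in members])
```

All four Acme variants land under one key, so a ticket-history question can be answered from a single profile. The approach breaks quickly on typos, abbreviations, and unrelated companies that share a core name, which is why rules like these do not scale on their own.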

4. Integration Complexity Across Siloed Systems

Enterprise environments average nearly 900 applications, with only about 28% meaningfully connected according to Gartner. Ninety-five percent of IT leaders report that integration issues actively impede AI adoption.

AI might perform in isolation but breaks down when asked to operate across workflows spanning multiple platforms. Even Salesforce admitted its Einstein Copilot struggled in pilots because it could not reliably navigate customer data silos and legacy CRM workflows.

5. Missing Documentation

AI agents need documented processes to automate workflows. In most organizations, critical procedures exist only in the heads of experienced employees. There is no centralized knowledge base, no standard operating procedures, no clear workflow documentation.

You cannot automate what is not defined. When agents encounter undocumented processes, they either fail to act or make assumptions that lead to errors.

6. Insufficient Guardrails for Autonomous Actions

Autonomous systems frequently operate without clear boundaries, auditability, or risk controls. The Replit database deletion was not just a technical failure but a governance failure. The system had no meaningful constraints on what it could modify, no approval workflows for destructive actions, and no audit trail.

7. Overestimating Models While Underinvesting in Data

Nearly two-thirds of CEOs invest in AI technologies before understanding the value they bring, according to the IBM CEO survey. They focus on model selection while treating data preparation as a minor implementation detail.

Powerful models operating on fragmented data, across disconnected systems, without clear guardrails make failure predictable.

These seven issues converge on a single conclusion: AI fails when deployed without the structural foundations required for safe, scaled operation.

How Do You Build Production-Ready AI Infrastructure for MSPs?

Production-ready AI depends on solving foundational infrastructure problems as core architectural decisions, not afterthoughts. Only about 15% of organizations have achieved enterprise-wide AI deployment according to MIT’s NANDA report. The gap is infrastructure, not capability.

Structured Ingestion of Unstructured Data

The 80% of business value locked in unstructured data requires a different approach than traditional RAG. Standard systems hit a wall because they cannot fit all relevant text chunks into a model’s context window. One document might work. A thousand break it.

Transform unstructured data into structured databases automatically during ingestion. Instead of feeding raw text into models, convert PDFs, emails, and transcripts into queryable database tables. When someone asks an aggregation question, the system runs a SQL query and injects only results into the context, not the entire corpus.

Synthreo’s RAG 2.0 engine works this way. Token consumption drops dramatically. Speed improves. Accuracy increases because the system performs actual computations on structured data rather than reasoning about scattered text chunks. Every answer includes citations linking back to source documents.
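The general pattern of querying structured rows instead of passing raw text can be sketched with Python's built-in `sqlite3` module. This is a generic illustration, not Synthreo's implementation: the `tickets` schema, the sample rows, and the helper names are assumptions, and the extraction step that would turn documents into rows is not shown.

```python
import sqlite3

# Rows as an upstream extraction step might produce them (hypothetical schema and values).
extracted_tickets = [
    ("Acme", "2025-05-02", "network", 3.5),
    ("Acme", "2025-05-19", "email", 1.0),
    ("Acme", "2025-06-03", "network", 2.0),
    ("Globex", "2025-06-11", "backup", 4.0),
]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tickets (client TEXT, opened TEXT, category TEXT, hours REAL)")
conn.executemany("INSERT INTO tickets VALUES (?, ?, ?, ?)", extracted_tickets)


def ticket_summary(client):
    """Aggregate in the database rather than asking the model to count text chunks."""
    return conn.execute(
        "SELECT category, COUNT(*), SUM(hours) FROM tickets "
        "WHERE client = ? GROUP BY category ORDER BY category",
        (client,),
    ).fetchall()


def build_context(client):
    """Format only the aggregated rows for inclusion in a model prompt."""
    lines = [f"{cat}: tickets={n}, hours={hrs:.1f}" for cat, n, hrs in ticket_summary(client)]
    return f"Ticket summary for {client}\n" + "\n".join(lines)


context = build_context("Acme")
print(context)
```

The prompt receives a few summary lines instead of every ticket, and the counts come from an actual computation. The size of that context stays roughly constant whether the table holds four rows or four million.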

Automatic Entity Resolution Across Systems

Manual entity resolution does not scale. Traditional master data management projects consistently fail in SMB environments. Production systems need machine learning models that recognize and unify duplicate entities automatically as part of the ingestion process.

On Synthreo’s agentic AI platform, entity resolution happens automatically as data flows into the knowledge graph. The system identifies that “Acme Corp” in your CRM and “ACME Inc.” in contracts are the same organization, then merges them without manual mapping. The knowledge graph gets smarter with every interaction.

Cross-System Workflow Orchestration

Production AI needs to operate across existing tools, not replace them. Most MSP environments include PSA, RMM, documentation, finance, and client-specific systems. AI working in isolation delivers limited value. AI orchestrating workflows across platforms becomes operationally critical.

Synthreo’s Builder tool integrates with existing MSP stacks, allowing agents to pull context from IT Glue, update tickets in ConnectWise, and generate QBR content from multiple systems without replacing tools or requiring extensive custom development.

Built-In Guardrails and Auditability

Autonomous actions without boundaries create liability. Organizations need defined boundaries, approval workflows, and complete audit trails. Guardrails cannot be bolted on after deployment. They must be architectural.

Synthreo’s Access Rules Component provides granular control over what data each agent can access. Audit logs capture every action. Least privilege principles ensure agents only touch what they explicitly need. Critical actions require human approval before execution. Security and compliance controls are fundamental to how the platform operates.
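To show how an allowlist, an approval gate, and an audit trail fit together, here is a minimal generic sketch. It is not the Access Rules Component or any vendor API: the `Guardrail` class, the action names, and the agent name are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical action names that should never run without a human sign-off.
DESTRUCTIVE_ACTIONS = {"delete_record", "drop_table"}


@dataclass
class Guardrail:
    allowed_actions: set
    audit_log: list = field(default_factory=list)

    def _record(self, agent, action, target, approved_by, outcome):
        # Every decision is logged, including denials.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "target": target,
            "approved_by": approved_by,
            "outcome": outcome,
        })
        return outcome

    def authorize(self, agent, action, target, approved_by=None):
        """Return 'allowed', 'denied', or 'pending_approval' and log the decision."""
        if action not in self.allowed_actions:
            return self._record(agent, action, target, approved_by, "denied")
        if action in DESTRUCTIVE_ACTIONS and approved_by is None:
            return self._record(agent, action, target, approved_by, "pending_approval")
        return self._record(agent, action, target, approved_by, "allowed")


guard = Guardrail(allowed_actions={"read_ticket", "update_ticket", "delete_record"})
print(guard.authorize("triage-bot", "update_ticket", "T-1001"))
print(guard.authorize("triage-bot", "drop_table", "clients"))
print(guard.authorize("triage-bot", "delete_record", "T-1001"))
print(guard.authorize("triage-bot", "delete_record", "T-1001", approved_by="alice"))
```

The agent can update tickets freely, cannot drop tables at all, and can delete a record only once a named human has approved it. Because the check sits in front of every action, the audit log is complete by construction rather than dependent on each agent remembering to write to it.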

Zero Data Retention by Default

Data privacy fears paralyze AI adoption. Clients worry their sensitive information will train public models or leak to competitors. Production systems need verifiable guarantees: data will not leave the controlled environment, will not train external models, and will not be retained beyond immediate operational needs.

Synthreo uses a two-layer protection model. RAG 2.0 sends only relevant, structured data to models, not entire documents. The platform uses Azure Foundry’s Zero Data Retention models by default. Even filtered data is never stored or used for training.

Pre-Built Workflows for Immediate Value

Most AI projects stall in proof-of-concept because platforms treat every use case as a greenfield engineering problem. Production-ready systems need templates for common workflows that deliver value on day one.

Synthreo ships with production-ready agent templates: intelligent ticket triage, QBR generators, invoice reconciliation, HR assistants, and project pulse systems. These work immediately without custom development or six-month implementation cycles.

Why Should MSPs Care About AI Infrastructure Before Deploying Agents?

Your clients want systems that work reliably inside their existing environments without creating new liability risks. You own the outcome regardless of which vendor’s technology you chose.

Consider the stakes. When you deploy an AI agent that provides incorrect information, you are liable. Air Canada’s tribunal ruling showed that companies cannot disclaim responsibility for their AI outputs. When automation makes unauthorized changes to production systems, you are the one explaining why client data disappeared. When privacy controls fail, you are the one managing the breach response.

Reliable AI changes the picture. Accurate ticket triage, meaningful QBR insights, and automated invoice reconciliation within clear boundaries deliver measurable value, strengthen client relationships, and create recurring revenue.

MSPs that succeed in AI will not chase the latest models or deploy the most agents. They will deliver reliability, control, and measurable outcomes: dependable intelligence that clients trust in production.

MSPs building AI practices on this foundation gain credibility and competitive advantage. Those relying on fragile tools or poorly governed automation risk eroding both trust and margins.

How Do MSPs Move from AI Pilots to Reliable Production Deployments?

AI has reached an inflection point. The 95% failure rate is not inevitable. It is a symptom of deploying powerful technology without the infrastructure to support it.

The evidence is consistent. AI initiatives succeed when built on strong foundations: clean data ingestion that handles unstructured sources, automatic entity resolution that unifies fragmented context, cross-system integration that respects existing workflows, built-in guardrails with auditability, and verifiable retrieval that eliminates hallucinations.

MSP industry advisor Arlin Sorensen needed to make 57,000 documents spanning 20+ years searchable and actionable. Synthreo’s RAG 2.0 engine processed the entire archive without requiring data remediation. Sub-2-second retrieval times. Zero factual hallucinations. Citations for every answer. The system worked with the data as it existed.

That is the standard production AI should meet: reliable operation inside messy, real-world environments, not impressive demos. The market needs systems that work consistently when exposed to the complexity of actual business operations, not more AI experiments.

Schedule a conversation and see how MSPs are building reliable AI systems their clients trust.

Frequently Asked Questions About AI Deployment Failures for MSPs

Q: Why do AI deployments fail?

According to MIT’s July 2025 NANDA report, 95% of generative AI initiatives deliver zero measurable return. Most of these failures come from infrastructure problems, not model limitations. The seven primary causes covered in this article are poor data quality, inability to process unstructured data, lack of entity resolution, integration complexity across siloed systems, missing documentation, insufficient guardrails, and underinvestment in data preparation.

Q: What is the difference between an AI pilot and a production deployment?

Pilots run in controlled environments with clean data and manual oversight. Production means AI operates across real workflows with messy data, fragmented systems, and autonomous decision-making. Carnegie Mellon’s TheAgentCompany benchmark found even the best AI agent completed only 24% of realistic office tasks.

Q: How do you process unstructured data at scale without expensive remediation?

Turn unstructured data into structured databases automatically during ingestion. Instead of feeding raw text chunks into AI models, convert PDFs, emails, and transcripts into queryable tables. Synthreo’s RAG 2.0 engine processed 57,000 documents for MSP advisor Arlin Sorensen with zero data remediation required.

Q: How do you solve entity resolution when the same customer appears differently across every system?

Production systems use machine learning models that recognize and unify duplicate entities automatically during ingestion. On Synthreo’s platform, entity resolution builds a consistent view across CRM, email, contracts, and tickets without manual mapping or expensive MDM projects.

Q: How can MSPs deploy AI without creating liability risks?

Implement AI with the same rigor as other critical infrastructure: defined boundaries, human approval for critical actions, complete audit trails, zero data retention policies. The Air Canada chatbot ruling established that companies remain liable for AI outputs. Guardrails must be architectural, not afterthoughts.

Q: What about data privacy concerns blocking AI adoption?

Use a two-layer protection model. First, send only relevant structured data to models, not entire documents. Second, use zero data retention models where even filtered data is never stored or used for training. This architecture passes compliance review where standard platforms cannot.

The Synthreo Team builds the definitive agentic AI platform for MSPs. Synthreo’s RAG 2.0 engine, cross-system integration, and production-grade guardrails help MSPs deploy AI that works reliably in real-world client environments.